Date & Time: 2026-02-01 14:57:32

Instructor: Doctor Paul Walkin

Theme

The lecture contends that systems engineering is inherently messy and uncertain, yet the near future will be shaped by AI-enabled co-pilots, neurosymbolic methods, and evidence-based “maps” that enhance decision-making, requirements engineering, and lifecycle management. The shift is from intuition to traceable, justifiable, and practical automation, made tangible through exemplars and sandboxes.

Key Points

- “All models are wrong” (George Box) anchors the philosophy: AI co-pilots support human decision-makers rather than replace safety-critical judgment.

- Requirements engineering is messy: ambiguity, conflicts (especially at interfaces), unknown unknowns, and translating human intent into constraints under uncertainty.

- Systems engineering is still largely document-centric; a transition to model-based practices with AI augmentation is underway.

- Future practice should be guided by evidence and scientific methods—creating “maps” for disciplined, quantifiable decisions rather than relying on gut feeling.

- Vision initiative “Systems Engineering Go on the Horizon,” sponsored by SERC and NHTSA, looks beyond digital engineering toward tangible exemplars, sandboxes, and capability roadmaps to 2035.

- AI-enabled requirements engineering is a core exemplar, linking data accessibility to decision effectiveness and efficiency, while requiring domain and AI fundamentals.

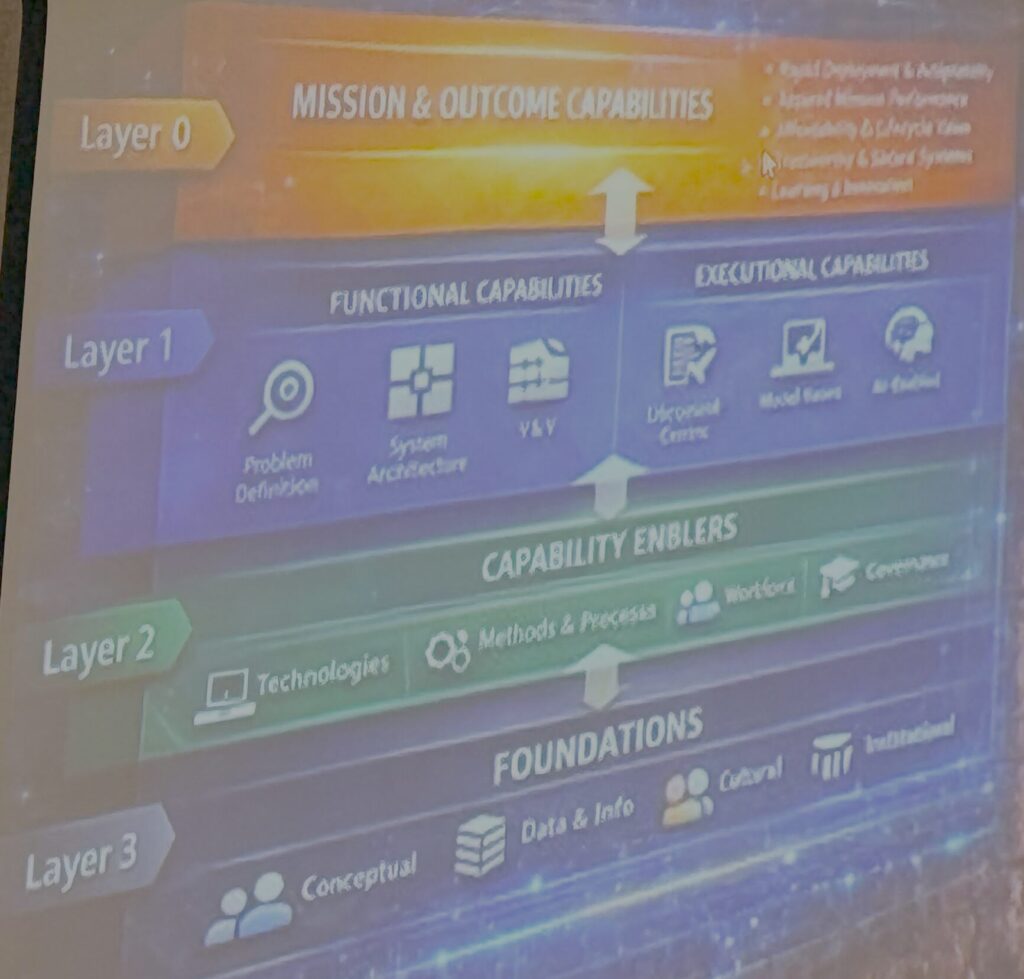

- Transformation framework: mission outcomes → functional/executional capabilities → enabling capabilities (technology, processes, methods, workforce, governance) → foundations (math and science-based approaches).

- Exemplars and tools: Houston (expert system/ontology), GenGrove (automated model creation and SME interaction), Obi-Wan (acquisition compiler), and PLUMR (Public Lifecycle Management Co-Pilot) with live dashboards and ingest checks.

- Tangible live demos: rapid supply chain analysis; executable HTML in presentations; parallel simulations (mass-spring and electric circuit equivalence) to align concepts and math.

- Conclusion points toward neurosymbolic AI for gaming, mission engineering, and acquisition to achieve trustworthy specifications and traceable decisions—“speak friend and enter” as a metaphor to avoid overthinking what’s already evident.

Highlights

- “All models are wrong.” — George Box

- “Are we actually overthinking this or is some of the future like right there in front of us?” — Doctor Paul Walkin

- “Requirements engineering is really well understanding the human intent in something—again, we do this under uncertainty.” — Doctor Paul Walkin

- “We’re really shifting from drawing maps where you can continuously navigate with evidence.” — Doctor Paul Walkin

- “Not everyone is going to be an expert on System Level Two… we need something to translate the models so domain experts can provide decisions.” — Doctor Paul Walkin

- “Neurosymbolic is the path that makes the most sense to provide justifiable traceability to decisions that AI makes or recommends.” — Doctor Paul Walkin

- “As AI—password? No, not really. It’s really a trustworthy specification.” — Doctor Paul Walkin

Topics

1. Philosophy and Scope: AI Co-pilots in Systems Engineering

Conclusion: The lecture sets a non-safety-critical scope and emphasizes AI as decision-support co-pilots guided by George Box’s “All models are wrong,” prioritizing trust and human oversight.

- AI co-pilots assist human decision-makers rather than replacing safety-critical judgment.

- George Box’s maxim underscores model humility and practical utility.

- Overlap and fringes are acceptable; the focus is enabling better decisions.

- The “speak friend and enter” metaphor cautions against overthinking straightforward, present solutions.

2. Requirements Engineering Under Uncertainty

Conclusion: Requirements engineering translates intent into constraints amid ambiguity, conflicts, and unknowns; AI can help clarify, connect, and manage these complexities.

- Ambiguity and conflicts are endemic; cross-organizational interfaces intensify conflicts.

- Unknown unknowns persist, even with risk management.

- Core task: translate human intent into constraints under uncertainty.

- AI tools can assist decision-makers within requirements processes.

- Suggestion: “Build AI tools that assist requirements engineers but demand foundational knowledge of AI and RE.”
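One small way an assistive tool could support the "clarify" part of this task is to flag vague wording before a requirement is baselined. The sketch below is illustrative only: the term list, the `flag_ambiguity` helper, and the sample requirements are assumptions made for this example, not something presented in the lecture.

```python
import re

# Hypothetical list of vague terms; a real project would curate its own.
WEAK_TERMS = ["fast", "user-friendly", "as appropriate", "adequate", "etc", "and/or"]

def flag_ambiguity(requirement: str) -> list[str]:
    """Return the vague terms found in a requirement statement."""
    text = requirement.lower()
    found = []
    for term in WEAK_TERMS:
        # Guard both sides so "fast" does not match inside "fasten" or "breakfast".
        if re.search(r"(?<!\w)" + re.escape(term) + r"(?!\w)", text):
            found.append(term)
    return found

# Sample requirements, invented for illustration.
requirements = {
    "REQ-1": "The pump shall start fast and be user-friendly.",
    "REQ-2": "The pump shall reach 3 bar within 5 seconds of the start command.",
}

report = {rid: flag_ambiguity(text) for rid, text in requirements.items()}
for rid, hits in report.items():
    print(rid, "->", hits if hits else "no vague terms found")
```

A check like this only surfaces candidates for review; deciding what the stakeholder actually intended remains the requirements engineer's job, in line with the co-pilot framing above.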

3. From Document-Based to Evidence-Based, Model-Driven Practice

Conclusion: Industry remains document-based; transitioning to model-based and evidence-guided “maps” will enable disciplined, quantifiable navigation of complex decisions.

- Current practice is surprisingly document-centric.

- Shift toward model-based systems engineering.

- Build “maps” to navigate with evidence instead of intuition.

- Make decisions disciplined and quantifiable to reduce reliance on gut feeling.

- Suggestion: “Establish scientific, evidence-driven decision maps to replace intuition.”

4. Program: Systems Engineering Go on the Horizon (SERC/NHTSA)

Conclusion: Sponsored vision work looks beyond digital engineering to 2035, delivering sandboxes, exemplars, and AI-enabled requirements engineering to make future capabilities tangible.

- Sponsored by the Systems Engineering Research Center and the National Highway Traffic Safety Administration.

- Beyond enterprise digital engineering; pursue tangible, workable artifacts.

- Create sandboxes and exemplars; align with Vision 2035.

- AI-enabled requirements engineering ties data accessibility to decision efficiency/effectiveness.

- Baselines and measurements of process efficiency are being documented.

5. Transformation Framework and Foundations

Conclusion: A layered framework connects mission outcomes to capabilities and foundational math/science, ensuring rigorous, traceable decisions and workforce evolution.

- Layers: mission outcomes → functional/executional capabilities → enabling capabilities (technology, processes, methods, workforce, governance).

- Roadmap of requirements technologies from current to cutting-edge toward 2035.

- Workforce must evolve with integrated capabilities.

- Mathematical and scientific foundations close capability gaps and justify decisions.

6. Exemplars: Houston, GenGrove, Obi-Wan, PLUMR

Conclusion: Practical tools demonstrate rapid, ontology-based expert systems, automated modeling with SME interaction, acquisition compilation, and lifecycle co-piloting with data integrity checks.

- Houston: expert system powered by rules/ontology for requirements co-piloting.

- GenGrove: automates model creation and interactions; inputs include text, drawings; outputs include explainable models for non-experts.

- Obi-Wan: acquisition compiler aligned to community processes; a work in progress under rapid development.

- PLUMR: lifecycle management co-pilot; dashboards ingest public data (e.g., LEGO kits), including parts catalogs and assemblies, and run data authority checks.
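The combination of an ontology with ingest and authority checks can be pictured with a very small sketch. Everything here is hypothetical: the `IS_A` hierarchy, the `AUTHORITATIVE_SOURCES` set, and the `PartRecord` fields are invented for illustration and are not the actual design of Houston or PLUMR.

```python
from dataclasses import dataclass

# Hypothetical is-a ontology: child type -> parent type.
IS_A = {"brick_2x4": "brick", "brick": "part", "axle": "part", "wheel": "part"}

# Hypothetical set of sources treated as authoritative for part data.
AUTHORITATIVE_SOURCES = {"official_catalog"}

@dataclass
class PartRecord:
    part_id: str
    part_type: str
    source: str

def is_a(part_type: str, ancestor: str) -> bool:
    """Walk the is-a chain to see whether part_type is a kind of ancestor."""
    current = part_type
    while current is not None:
        if current == ancestor:
            return True
        current = IS_A.get(current)  # None once the chain runs out
    return False

def ingest_check(record: PartRecord) -> list[str]:
    """Return human-readable findings; an empty list means the record passes."""
    findings = []
    if not is_a(record.part_type, "part"):
        findings.append(f"{record.part_id}: type '{record.part_type}' is not in the ontology")
    if record.source not in AUTHORITATIVE_SOURCES:
        findings.append(f"{record.part_id}: source '{record.source}' is not authoritative")
    return findings

for rec in [
    PartRecord("p1", "brick_2x4", "official_catalog"),
    PartRecord("p2", "gizmo", "forum_post"),
]:
    print(rec.part_id, ingest_check(rec) or "ok")
```

Returning findings as readable sentences, rather than a bare pass/fail flag, is one simple way to keep the check explainable to non-experts.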

7. Live Demonstrations and Tangible Interfaces

Conclusion: Executable, live demos (supply chain, side-by-side simulations) within presentations make concepts tangible, align math with engineering intuition, and accelerate understanding.

- Rapid supply chain analysis tool built in ~30 minutes.

- Executable HTML in presentations avoids static slides.

- Dual simulations (mass-spring vs. electric circuit equivalence) illustrate aligned mathematical principles.

- “A picture is worth a thousand words” applied to system behavior visualization.
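The mass-spring and circuit equivalence can be reproduced in a few lines. The notes do not say which circuit or parameters the demo used, so this sketch assumes a damped mass-spring system, m·x'' + c·x' + k·x = 0, and a series RLC circuit, L·q'' + R·q' + q/C = 0, with parameter values chosen arbitrarily. Under the mapping m↔L, c↔R, k↔1/C, x↔q, both are the same second-order equation, so one integrator serves both.

```python
def simulate(inertia, damping, stiffness, y0, v0=0.0, dt=1e-3, steps=5000):
    """Integrate inertia*y'' + damping*y' + stiffness*y = 0 with semi-implicit Euler."""
    y, v = y0, v0
    trajectory = [y]
    for _ in range(steps):
        a = -(damping * v + stiffness * y) / inertia
        v += a * dt  # update velocity first, then position (semi-implicit)
        y += v * dt
        trajectory.append(y)
    return trajectory

# Mechanical system: mass m [kg], damper c [N*s/m], spring k [N/m], displacement x [m].
m, c, k = 2.0, 0.5, 8.0
x = simulate(m, c, k, y0=1.0)

# Series RLC circuit: inductance L [H], resistance R [ohm], capacitance C [F], charge q [C].
L, R, C = 2.0, 0.5, 1.0 / 8.0
q = simulate(L, R, 1.0 / C, y0=1.0)

# With matched parameters the two trajectories should coincide.
max_gap = max(abs(a - b) for a, b in zip(x, q))
print(f"largest difference between x(t) and q(t): {max_gap:.2e}")
```

Plotting `x` and `q` side by side would give the kind of parallel view described above: two physical domains, one shared mathematical structure.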

8. Toward Trustworthy, Neurosymbolic AI

Conclusion: The strategic direction is neurosymbolic AI (for gaming, mission engineering, acquisition) to deliver traceable, justifiable AI decisions—aiming for “trustworthy specification” rather than a simplistic “password.”

- New panel participation on neurosymbolic AI across domains.

- Neurosymbolic pairing provides traceability and justification for AI recommendations.

- The goal is trustworthy specification, not magical solutions.

- Golden Sentence: “Neurosymbolic is the path that makes the most sense to provide justifiable traceability.”
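A toy illustration of the pairing, under heavy assumptions: a scoring function stands in for the learned component, explicit rules act as the symbolic component, and every rule outcome is recorded so a recommendation can be justified afterward. The `learned_score` heuristic, the `RULES`, and the option data are all hypothetical; no neural network is trained here.

```python
from dataclasses import dataclass, field

@dataclass
class Recommendation:
    option: str
    score: float
    accepted: bool
    trace: list = field(default_factory=list)

def learned_score(option: dict) -> float:
    """Placeholder for a trained model; here just a hand-written cost heuristic."""
    return round(1.0 / (1.0 + option["cost"] / 100.0), 3)

# Symbolic rules as (name, predicate) pairs. Hypothetical constraints for illustration.
RULES = [
    ("mass_limit_kg<=5", lambda o: o["mass_kg"] <= 5),
    ("supplier_approved", lambda o: o["supplier_approved"]),
]

def recommend(options: dict) -> list:
    """Score every option, apply every rule, and keep a readable trace of each check."""
    results = []
    for name, attrs in options.items():
        trace = [f"{rule}: {'pass' if check(attrs) else 'fail'}" for rule, check in RULES]
        accepted = all(entry.endswith("pass") for entry in trace)
        results.append(Recommendation(name, learned_score(attrs), accepted, trace))
    # Accepted options first, then by descending score.
    return sorted(results, key=lambda r: (not r.accepted, -r.score))

options = {
    "A": {"cost": 100, "mass_kg": 4, "supplier_approved": True},
    "B": {"cost": 50, "mass_kg": 6, "supplier_approved": True},
}
ranked = recommend(options)
for r in ranked:
    print(r.option, r.score, "accepted" if r.accepted else "rejected", r.trace)
```

Option B scores higher on the heuristic but is rejected by a rule, and the trace says which one. That is the traceability argument in miniature: the learned part proposes, the symbolic part constrains and explains.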

Suggestions

- Build AI tools that assist requirements engineers but demand foundational knowledge of AI and requirements engineering.

- Establish scientific, evidence-driven decision maps to replace intuition.

- Create sandboxes with exemplars to make future capabilities tangible for stakeholders.

- Integrate ontology and rules to enable explainable expert systems for requirements co-piloting.

- Ensure workforce development plans accompany technology roadmaps toward 2035.

- Use executable demos and parallel simulations to align math with engineering intuition and communicate concepts to non-experts.

- Implement data authority checks in lifecycle dashboards to improve decision reliability.

- Adopt neurosymbolic AI to achieve traceable, justifiable decision recommendations.

- Translate models for non-expert domain stakeholders to facilitate effective decision-making.

- Baseline and measure process efficiency to quantify gains from AI-enabled tools.

AI Suggestions

- Core lesson: Apply AI-enabled, neurosymbolic co-pilots for requirements and decision-making in systems engineering. Start by building a small ontology-backed requirements co-pilot for a contained domain (e.g., a LEGO kit system) and integrate a simple ML component for text ingestion to gain hands-on experience with rules/ontology, ingestion pipelines, and an explainability layer that translates model outputs for non-experts.

- Core content of AI-enabled, neurosymbolic co-pilots: Purpose—assist human decisions; Components—ontology/rules + ML; Outputs—traceable, explainable recommendations; Practice—evidence-based “maps,” data authority checks, model translation for stakeholders.

- Extracurricular Resources:

  - “Neurosymbolic AI: The Road to Robust AI” (overview and principles of integrating symbolic reasoning with neural networks): https://arxiv.org/abs/2104.00681

  - “Model-Based Systems Engineering with SysML” (foundation for transitioning from documents to models): https://www.omg.org/spec/SysML

  - “Requirements Engineering Fundamentals” (practical guidance on intent, constraints, and ambiguity management): https://www.ireb.org/en/requirements-engineering/faq/